We have two ways of loading a conversation with a previous history: fork conversation and the experimental resume that we had before. In this PR, I am unifying their code paths. The shared path gets the history items and records them in a brand-new conversation.

This PR also constrains the rollout recorder's responsibilities to only recording to disk and loading from disk.

The PR also fixes a current bug when we fork twice in a row:

History 1: <Environment Context> UserMessage_1 UserMessage_2 UserMessage_3

**Fork with n = 1 (only remove one element)**

History 2: <Environment Context> UserMessage_1 UserMessage_2 <Environment Context>

**Fork with n = 1 (only remove one element)**

History 3: <Environment Context> UserMessage_1 UserMessage_2 **<Environment Context>**

This shouldn't happen, but we were appending the `<Environment Context>` after each spawn and it is counted as a _user message_, so the second fork removes only that entry and then appends it again. Now, we don't add this message when restoring an old conversation.

OpenAI Codex CLI

npm i -g @openai/codex

or brew install codex

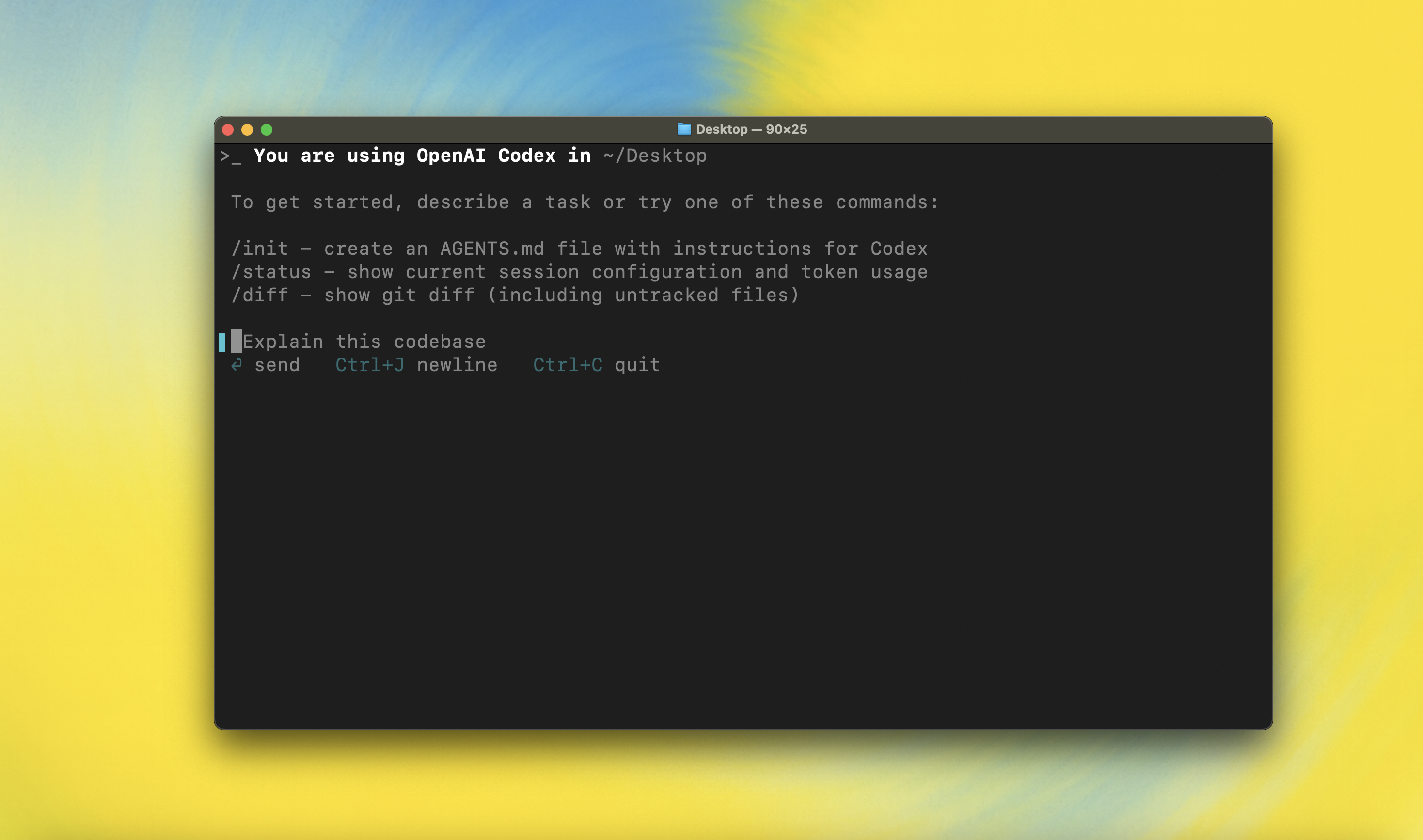

Codex CLI is a coding agent from OpenAI that runs locally on your computer.

If you are looking for the cloud-based agent from OpenAI, Codex Web, see chatgpt.com/codex.

Quickstart

Installing and running Codex CLI

Install globally with your preferred package manager. If you use npm:

npm install -g @openai/codex

Alternatively, if you use Homebrew:

brew install codex

Then simply run codex to get started:

codex

You can also go to the latest GitHub Release and download the appropriate binary for your platform.

Each GitHub Release contains many executables, but in practice, you likely want one of these:

- macOS
  - Apple Silicon/arm64: codex-aarch64-apple-darwin.tar.gz
  - x86_64 (older Mac hardware): codex-x86_64-apple-darwin.tar.gz

- Linux
  - x86_64: codex-x86_64-unknown-linux-musl.tar.gz
  - arm64: codex-aarch64-unknown-linux-musl.tar.gz

Each archive contains a single entry with the platform baked into the name (e.g., codex-x86_64-unknown-linux-musl), so you likely want to rename it to codex after extracting it.
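The extract-and-rename step can be sketched as a small shell function. This is a minimal sketch, not an official installer: the function name `install_codex_archive` and the destination directory are hypothetical, and it assumes the archive has already been downloaded and really does contain a single entry, as described above.

```shell
# install_codex_archive ARCHIVE DEST_DIR
# Extracts a release archive and installs its single entry as DEST_DIR/codex.
# Hypothetical helper for illustration; not part of Codex itself.
install_codex_archive() {
  archive=$1
  dest_dir=$2

  # Ask tar for the name of the first (and, by assumption, only) entry,
  # e.g. codex-x86_64-unknown-linux-musl.
  entry=$(tar -tzf "$archive" | head -n 1)
  [ -n "$entry" ] || return 1

  # Extract into a scratch directory so nothing else is overwritten.
  workdir=$(mktemp -d) || return 1
  tar -xzf "$archive" -C "$workdir" || return 1

  # Move the platform-named binary into place under the short name "codex".
  mkdir -p "$dest_dir" || return 1
  mv "$workdir/$entry" "$dest_dir/codex" || return 1
  chmod +x "$dest_dir/codex"
  rm -rf "$workdir"
}

# Example (the destination is an assumption; pick any directory on your PATH):
#   install_codex_archive codex-x86_64-unknown-linux-musl.tar.gz "$HOME/.local/bin"
```

Extracting into a scratch directory first keeps the rename independent of whatever is already in the current directory.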

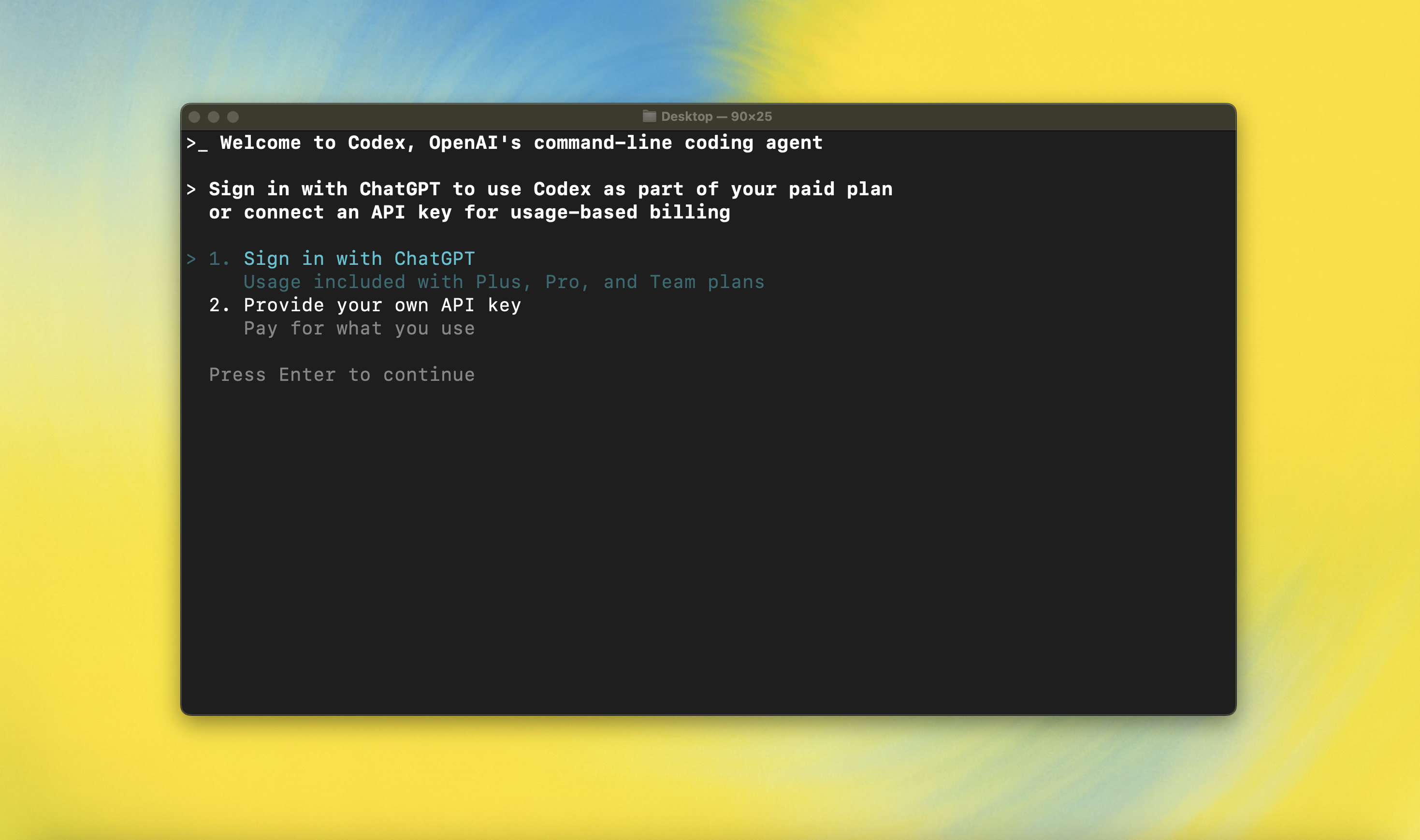

Using Codex with your ChatGPT plan

Run codex and select Sign in with ChatGPT. We recommend signing into your ChatGPT account to use Codex as part of your Plus, Pro, Team, Edu, or Enterprise plan. Learn more about what's included in your ChatGPT plan.

You can also use Codex with an API key, but this requires additional setup. If you previously used an API key for usage-based billing, see the migration steps. If you're having trouble with login, please comment on this issue.

Model Context Protocol (MCP)

Codex CLI supports MCP servers. Enable them by adding an mcp_servers section to your ~/.codex/config.toml.
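As a rough illustration of what such a section might look like, the sketch below appends one entry to a config file. The helper name `add_example_mcp_server`, the server name `example-server`, and the `npx` package are all placeholders, and the `command`/`args` keys reflect my understanding of the config format, so check the Configuration docs before relying on them.

```shell
# add_example_mcp_server CONFIG_FILE
# Appends a placeholder MCP server entry to the given Codex config file.
# Hypothetical helper: "example-server" and "example-mcp-server" are made up.
add_example_mcp_server() {
  config_file=$1

  # Create the parent directory (e.g. ~/.codex) if it does not exist yet.
  mkdir -p "$(dirname "$config_file")" || return 1

  # Each server gets its own table under mcp_servers, naming the
  # command Codex should launch and the arguments to pass to it.
  cat >> "$config_file" <<'EOF'

[mcp_servers.example-server]
command = "npx"
args = ["-y", "example-mcp-server"]
EOF
}

# Example:
#   add_example_mcp_server "$HOME/.codex/config.toml"
```

The function appends rather than overwrites, so existing preferences in the file are left in place. Running it twice would add a duplicate table, so edit the file by hand if the section already exists.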

Configuration

Codex CLI supports a rich set of configuration options, with preferences stored in ~/.codex/config.toml. For full configuration options, see Configuration.

Docs & FAQ

- Getting started

- Sandbox & approvals

- Authentication

- Advanced

- Zero data retention (ZDR)

- Contributing

- Install & build

- FAQ

- Open source fund

License

This repository is licensed under the Apache-2.0 License.