This release represents a comprehensive transformation of the codebase from Codex to LLMX, enhanced with LiteLLM integration to support 100+ LLM providers through a unified API. ## Major Changes ### Phase 1: Repository & Infrastructure Setup - Established new repository structure and branching strategy - Created comprehensive project documentation (CLAUDE.md, LITELLM-SETUP.md) - Set up development environment and tooling configuration ### Phase 2: Rust Workspace Transformation - Renamed all Rust crates from `codex-*` to `llmx-*` (30+ crates) - Updated package names, binary names, and workspace members - Renamed core modules: codex.rs → llmx.rs, codex_delegate.rs → llmx_delegate.rs - Updated all internal references, imports, and type names - Renamed directories: codex-rs/ → llmx-rs/, codex-backend-openapi-models/ → llmx-backend-openapi-models/ - Fixed all Rust compilation errors after mass rename ### Phase 3: LiteLLM Integration - Integrated LiteLLM for multi-provider LLM support (Anthropic, OpenAI, Azure, Google AI, AWS Bedrock, etc.) - Implemented OpenAI-compatible Chat Completions API support - Added model family detection and provider-specific handling - Updated authentication to support LiteLLM API keys - Renamed environment variables: OPENAI_BASE_URL → LLMX_BASE_URL - Added LLMX_API_KEY for unified authentication - Enhanced error handling for Chat Completions API responses - Implemented fallback mechanisms between Responses API and Chat Completions API ### Phase 4: TypeScript/Node.js Components - Renamed npm package: @codex/codex-cli → @valknar/llmx - Updated TypeScript SDK to use new LLMX APIs and endpoints - Fixed all TypeScript compilation and linting errors - Updated SDK tests to support both API backends - Enhanced mock server to handle multiple API formats - Updated build scripts for cross-platform packaging ### Phase 5: Configuration & Documentation - Updated all configuration files to use LLMX naming - Rewrote README and documentation for LLMX branding - Updated config paths: ~/.codex/ → ~/.llmx/ - Added comprehensive LiteLLM setup guide - Updated all user-facing strings and help text - Created release plan and migration documentation ### Phase 6: Testing & Validation - Fixed all Rust tests for new naming scheme - Updated snapshot tests in TUI (36 frame files) - Fixed authentication storage tests - Updated Chat Completions payload and SSE tests - Fixed SDK tests for new API endpoints - Ensured compatibility with Claude Sonnet 4.5 model - Fixed test environment variables (LLMX_API_KEY, LLMX_BASE_URL) ### Phase 7: Build & Release Pipeline - Updated GitHub Actions workflows for LLMX binary names - Fixed rust-release.yml to reference llmx-rs/ instead of codex-rs/ - Updated CI/CD pipelines for new package names - Made Apple code signing optional in release workflow - Enhanced npm packaging resilience for partial platform builds - Added Windows sandbox support to workspace - Updated dotslash configuration for new binary names ### Phase 8: Final Polish - Renamed all assets (.github images, labels, templates) - Updated VSCode and DevContainer configurations - Fixed all clippy warnings and formatting issues - Applied cargo fmt and prettier formatting across codebase - Updated issue templates and pull request templates - Fixed all remaining UI text references ## Technical Details **Breaking Changes:** - Binary name changed from `codex` to `llmx` - Config directory changed from `~/.codex/` to `~/.llmx/` - Environment variables renamed (CODEX_* → LLMX_*) - npm package renamed to `@valknar/llmx` **New Features:** - Support for 100+ LLM providers via LiteLLM - Unified authentication with LLMX_API_KEY - Enhanced model provider detection and handling - Improved error handling and fallback mechanisms **Files Changed:** - 578 files modified across Rust, TypeScript, and documentation - 30+ Rust crates renamed and updated - Complete rebrand of UI, CLI, and documentation - All tests updated and passing **Dependencies:** - Updated Cargo.lock with new package names - Updated npm dependencies in llmx-cli - Enhanced OpenAPI models for LLMX backend This release establishes LLMX as a standalone project with comprehensive LiteLLM integration, maintaining full backward compatibility with existing functionality while opening support for a wide ecosystem of LLM providers. 🤖 Generated with [Claude Code](https://claude.com/claude-code) Co-Authored-By: Claude <noreply@anthropic.com> Co-Authored-By: Sebastian Krüger <support@pivoine.art>

npm i -g @valknar/llmx

or brew install --cask llmx

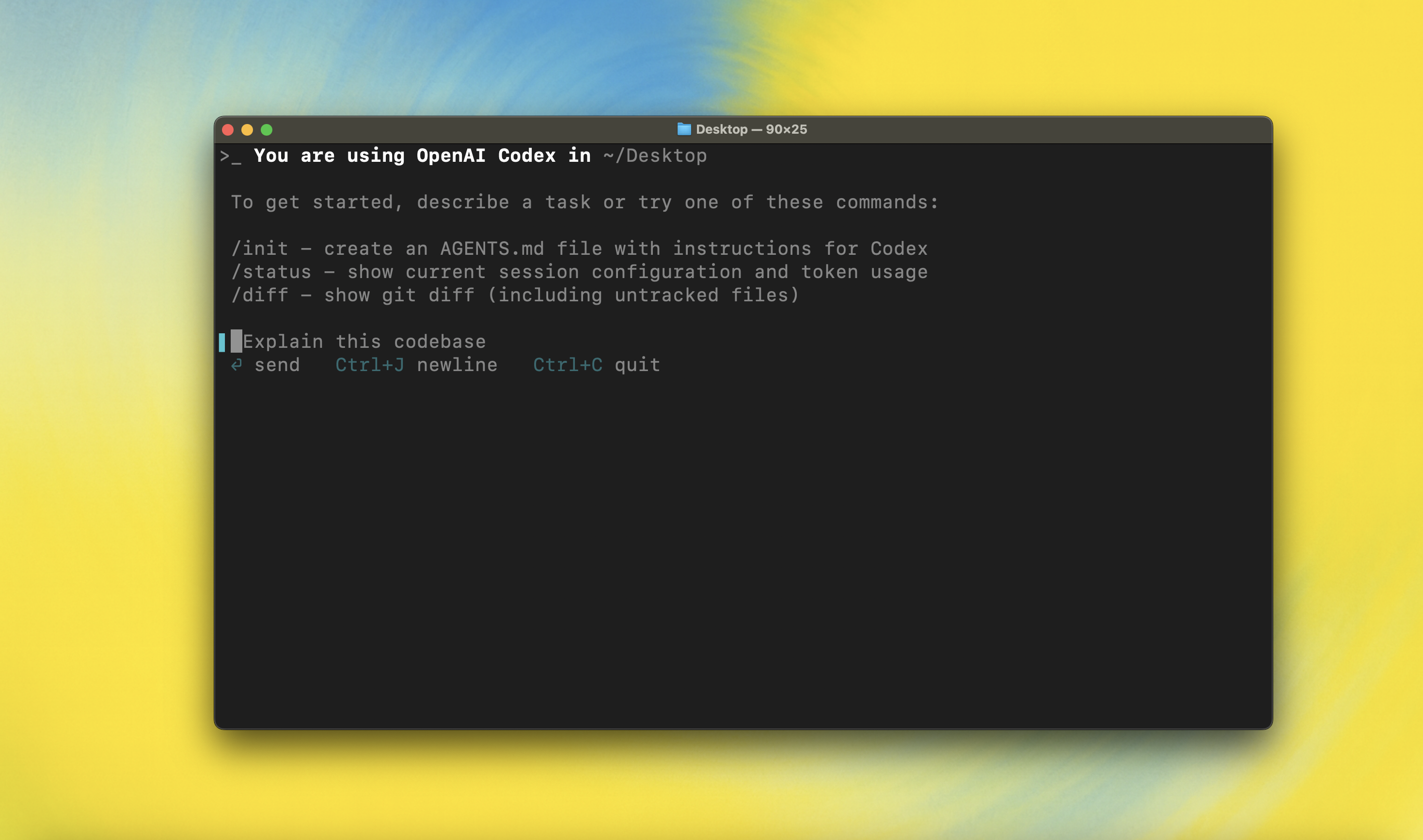

LLMX CLI is a coding agent powered by LiteLLM that runs locally on your computer.

This project is a community fork with enhanced support for multiple LLM providers via LiteLLM.

Original project: github.com/openai/llmx

Quickstart

Installing and running LLMX CLI

Install globally with your preferred package manager. If you use npm:

npm install -g @valknar/llmx

Alternatively, if you use Homebrew:

brew install --cask llmx

Then simply run llmx to get started:

llmx

If you're running into upgrade issues with Homebrew, see the FAQ entry on brew upgrade llmx.

You can also go to the latest GitHub Release and download the appropriate binary for your platform.

Each GitHub Release contains many executables, but in practice, you likely want one of these:

- macOS

- Apple Silicon/arm64:

llmx-aarch64-apple-darwin.tar.gz - x86_64 (older Mac hardware):

llmx-x86_64-apple-darwin.tar.gz

- Apple Silicon/arm64:

- Linux

- x86_64:

llmx-x86_64-unknown-linux-musl.tar.gz - arm64:

llmx-aarch64-unknown-linux-musl.tar.gz

- x86_64:

Each archive contains a single entry with the platform baked into the name (e.g., llmx-x86_64-unknown-linux-musl), so you likely want to rename it to llmx after extracting it.

Using LLMX with LiteLLM

LLMX is powered by LiteLLM, which provides access to 100+ LLM providers including OpenAI, Anthropic, Google, Azure, AWS Bedrock, and more.

Quick Start with LiteLLM:

# Set your LiteLLM server URL (default: http://localhost:4000/v1)

export LITELLM_BASE_URL="http://localhost:4000/v1"

export LITELLM_API_KEY="your-api-key"

# Run LLMX

llmx "hello world"

Configuration: See LITELLM-SETUP.md for detailed setup instructions.

You can also use LLMX with ChatGPT or OpenAI API keys. For authentication options, see the authentication docs.

Model Context Protocol (MCP)

LLMX can access MCP servers. To configure them, refer to the config docs.

Configuration

LLMX CLI supports a rich set of configuration options, with preferences stored in ~/.llmx/config.toml. For full configuration options, see Configuration.

Docs & FAQ

- Getting started

- Configuration

- Sandbox & approvals

- Authentication

- Automating LLMX

- Advanced

- Zero data retention (ZDR)

- Contributing

- Install & build

- FAQ

- Open source fund

License

This repository is licensed under the Apache-2.0 License.