cargo nextest to speed up CI builds (#3323)

I started looking at https://nexte.st/ because I was interested in a
test harness that lets a test dynamically declare itself "skipped,"
which would be a nice alternative to this pattern:

4c46490e53/codex-rs/core/tests/suite/cli_stream.rs (L22-L27)
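
For readers without the file open, the pattern in question is roughly an early return guarded by an environment check. The following is a minimal sketch, not the code from `cli_stream.rs`: the variable name `EXAMPLE_NETWORK_DISABLED`, the helper `should_skip`, and the test body are all hypothetical.

```rust
use std::env;

/// Hypothetical environment variable, used only for this illustration.
const SKIP_ENV_VAR: &str = "EXAMPLE_NETWORK_DISABLED";

/// Decide whether to skip, given the variable's value (if it is set).
/// Kept separate from the environment lookup so the rule is easy to check.
fn should_skip(value: Option<&str>) -> bool {
    matches!(value, Some(v) if !v.is_empty() && v != "0")
}

#[test]
fn talks_to_network() {
    let value = env::var(SKIP_ENV_VAR).ok();
    if should_skip(value.as_deref()) {
        // The harness cannot tell this apart from a real pass: after the
        // early return the test is simply reported as "ok".
        println!("skipping talks_to_network: {SKIP_ENV_VAR} is set");
        return;
    }

    // Real assertions would go here.
    assert_eq!(2 + 2, 4);
}
```

Because the test returns normally, both `cargo test` and `cargo nextest run` report it as passed rather than skipped, which is the gap a dynamic skip feature would close.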

ChatGPT pointed me at https://nexte.st/, which also claims to be "up to
3x as fast as cargo test." Locally, in `codex-rs`, I see:

- `cargo nextest run` finishes in 19s
- `cargo test` finishes in 37s

Though looking at CI, the wins are not quite as big, presumably because my
laptop has more cores than our GitHub runners (which is a separate
issue...). Comparing the [CI jobs from this
PR](https://github.com/openai/codex/actions/runs/17561325162/job/49878216246?pr=3323)
with those of a [recent open
PR](https://github.com/openai/codex/actions/runs/17561066581/job/49877342753?pr=3321):

| | `cargo test` | `cargo nextest` |
| ----------------------------------------------- | ------------ | --------------- |
| `macos-14 - aarch64-apple-darwin` | 2m16s | 1m51s |
| `macos-14 - aarch64-apple-darwin` | 5m04s | 3m44s |
| `ubuntu-24.04 - x86_64-unknown-linux-musl` | 2m02s | 1m56s |
| `ubuntu-24.04-arm - aarch64-unknown-linux-musl` | 2m01s | 1m35s |
| `windows-latest - x86_64-pc-windows-msvc` | 3m07s | 2m53s |
| `windows-11-arm - aarch64-pc-windows-msvc` | 3m10s | 2m45s |
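
To put the table on the same footing as the "up to 3x" claim, each pair of timings can be turned into a speedup ratio. A small sketch, assuming durations are written as in the table (`2m16s`) or as bare seconds (`37s`); the helper names `parse_secs` and `speedup` are made up for this illustration.

```rust
/// Parse durations written like "2m16s" or "37s" into whole seconds.
/// Returns None for anything that does not match those two shapes.
fn parse_secs(text: &str) -> Option<u32> {
    let body = text.strip_suffix('s')?;
    match body.split_once('m') {
        Some((mins, secs)) => {
            let mins: u32 = mins.parse().ok()?;
            let secs: u32 = secs.parse().ok()?;
            Some(mins * 60 + secs)
        }
        None => body.parse().ok(),
    }
}

/// Ratio of the `cargo test` time to the `cargo nextest` time.
/// Values above 1.0 mean nextest was faster.
fn speedup(cargo_test: &str, nextest: &str) -> Option<f64> {
    let base = parse_secs(cargo_test)?;
    let new = parse_secs(nextest)?;
    if new == 0 {
        return None; // avoid dividing by zero
    }
    Some(f64::from(base) / f64::from(new))
}

#[test]
fn local_run_is_nearly_twice_as_fast() {
    // Local timings quoted above: 37s for cargo test, 19s for nextest.
    let ratio = speedup("37s", "19s").expect("both durations parse");
    assert!(ratio > 1.9 && ratio < 2.0);
}
```

With the local numbers, `speedup("37s", "19s")` comes out a little under 2x; the CI rows land well below that.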

I thought that, to start, we would only make this change in CI before
declaring it the "official" way for the team to run the test suite.

Though unfortunately, I do not believe that `cargo nextest` _actually_
supports a dynamic skip feature, so I guess I'll have to keep looking?

Some related discussions:

- https://internals.rust-lang.org/t/pre-rfc-skippable-tests/14611

- https://internals.rust-lang.org/t/skippable-tests/21260

OpenAI Codex CLI

npm i -g @openai/codex

or brew install codex

Codex CLI is a coding agent from OpenAI that runs locally on your computer.

If you are looking for the cloud-based agent from OpenAI, Codex Web, see chatgpt.com/codex.

Quickstart

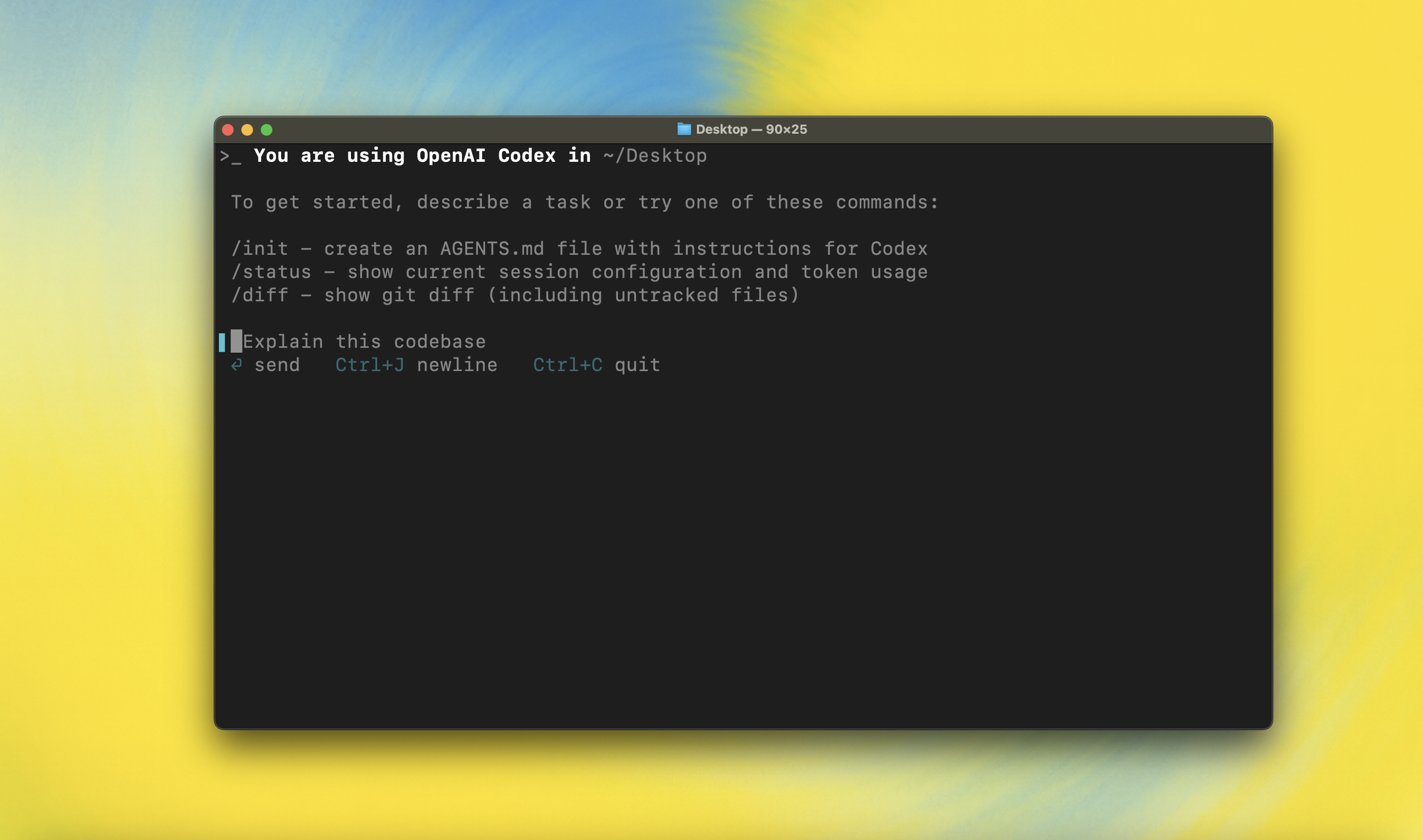

Installing and running Codex CLI

Install globally with your preferred package manager. If you use npm:

npm install -g @openai/codex

Alternatively, if you use Homebrew:

brew install codex

Then simply run codex to get started:

codex

You can also go to the latest GitHub Release and download the appropriate binary for your platform.

Each GitHub Release contains many executables, but in practice, you likely want one of these:

- macOS
  - Apple Silicon/arm64: codex-aarch64-apple-darwin.tar.gz
  - x86_64 (older Mac hardware): codex-x86_64-apple-darwin.tar.gz
- Linux
  - x86_64: codex-x86_64-unknown-linux-musl.tar.gz
  - arm64: codex-aarch64-unknown-linux-musl.tar.gz

Each archive contains a single entry with the platform baked into the name (e.g., codex-x86_64-unknown-linux-musl), so you likely want to rename it to codex after extracting it.

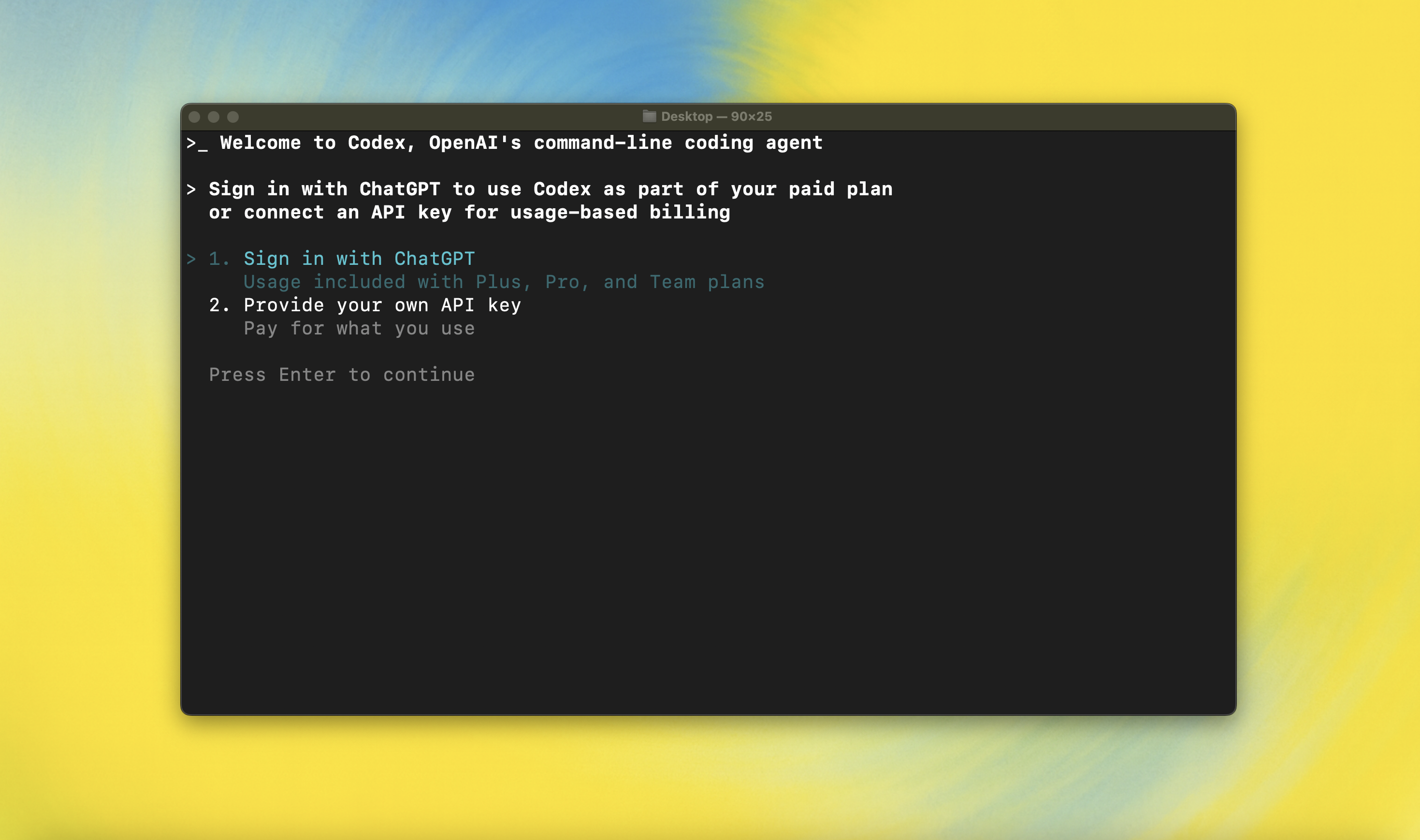

Using Codex with your ChatGPT plan

Run codex and select Sign in with ChatGPT. We recommend signing into your ChatGPT account to use Codex as part of your Plus, Pro, Team, Edu, or Enterprise plan. Learn more about what's included in your ChatGPT plan.

You can also use Codex with an API key, but this requires additional setup. If you previously used an API key for usage-based billing, see the migration steps. If you're having trouble with login, please comment on this issue.

Model Context Protocol (MCP)

Codex CLI supports MCP servers. Enable them by adding an mcp_servers section to your ~/.codex/config.toml.

Configuration

Codex CLI supports a rich set of configuration options, with preferences stored in ~/.codex/config.toml. For full configuration options, see Configuration.

Docs & FAQ

- Getting started

- Sandbox & approvals

- Authentication

- Advanced

- Zero data retention (ZDR)

- Contributing

- Install & build

- FAQ

- Open source fund

License

This repository is licensed under the Apache-2.0 License.